“If you’re searching, filtering, or aggregating over large volumes of semi-structured data, Elasticsearch is probably the right tool. If you need ACID transactions, it’s definitely the wrong one.”

Elasticsearch appears in almost every system design interview that involves search — product search, log analytics, autocomplete, geospatial queries, or real-time dashboards. This article covers what you need to know: how it works under the hood, how to design around its strengths and weaknesses, and the tradeoffs that come up in interviews.

What is Elasticsearch?

Elasticsearch is a distributed, RESTful search and analytics engine built on top of Apache Lucene. It stores JSON documents, indexes them into inverted indexes, and provides fast full-text search, structured queries, and aggregations.

Core properties:

- Schema-free (but schema-aware via mappings)

- Near real-time search (~1 second delay after indexing)

- Horizontally scalable via sharding

- High availability via replication

- REST API — every operation is an HTTP request

The Inverted Index: Foundation of Search

The inverted index is the data structure that makes Elasticsearch fast. Instead of scanning every document for a term, you look up the term and instantly get the list of documents containing it.
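A minimal sketch of the idea in Python, assuming a made-up three-document corpus and plain whitespace tokenization. Lucene's real structure is far more compact and also stores positions and frequencies, so treat this only as an illustration of the lookup direction:

```python
from collections import defaultdict

# Toy corpus; the document IDs and text are invented for illustration.
docs = {
    1: "wireless noise cancelling headphones",
    2: "wired headphones with microphone",
    3: "wireless keyboard",
}

# Build the inverted index: term -> set of IDs of documents containing it.
inverted_index = defaultdict(set)
for doc_id, text in docs.items():
    for term in text.split():
        inverted_index[term].add(doc_id)

def search(*terms):
    """Return IDs of documents containing every term (an AND query)."""
    postings = [inverted_index.get(t, set()) for t in terms]
    return sorted(set.intersection(*postings)) if postings else []

print(search("headphones"))              # [1, 2]
print(search("wireless", "headphones"))  # [1]
```

The cost of a lookup depends on the size of the posting lists for the query terms, not on the total number of documents.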

How Text Analysis Works

When a document is indexed, each text field goes through an analyzer pipeline:

"The Quick Brown Fox Jumps!"

↓ Character Filter (strip HTML, etc.; nothing to strip here)

"The Quick Brown Fox Jumps!"

↓ Tokenizer (split on whitespace/punctuation)

["The", "Quick", "Brown", "Fox", "Jumps"]

↓ Token Filters (lowercase, stemming, stop words)

["quick", "brown", "fox", "jump"]

The same analyzer runs on the search query, so “Quick FOX” becomes ["quick", "fox"], which matches the indexed terms.
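The three stages can be imitated in a few lines of Python. This is a rough sketch, not Elasticsearch's implementation: the stop-word set is a tiny illustrative subset, and `naive_stem` is a crude stand-in for a real stemmer.

```python
import re

STOP_WORDS = {"the", "a", "an", "and", "or", "of"}  # tiny illustrative subset

def char_filter(text):
    # Strip HTML tags, standing in for a character filter such as html_strip.
    return re.sub(r"<[^>]+>", "", text)

def tokenize(text):
    # Split into runs of letters and digits, dropping punctuation.
    return re.findall(r"[A-Za-z0-9]+", text)

def naive_stem(token):
    # Crude stand-in for a real stemmer: drop one trailing "s".
    return token[:-1] if token.endswith("s") and len(token) > 3 else token

def analyze(text):
    tokens = [t.lower() for t in tokenize(char_filter(text))]
    return [naive_stem(t) for t in tokens if t not in STOP_WORDS]

print(analyze("The Quick Brown Fox Jumps!"))  # ['quick', 'brown', 'fox', 'jump']
print(analyze("Quick FOX"))                   # ['quick', 'fox']
```

Because the same `analyze` function is applied at index time and at query time, the query tokens line up with the indexed terms.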

// Custom analyzer example

PUT /products

{

"settings": {

"analysis": {

"analyzer": {

"product_analyzer": {

"type": "custom",

"tokenizer": "standard",

"filter": ["lowercase", "english_stemmer", "english_stop"]

}

},

"filter": {

"english_stemmer": { "type": "stemmer", "language": "english" },

"english_stop": { "type": "stop", "stopwords": "_english_" }

}

}

}

}

BM25: The Relevance Algorithm

Elasticsearch uses BM25 (Best Matching 25) to score documents. Three factors drive the score:

- Term Frequency (TF): how often the term appears in the document (more = higher score, with diminishing returns)

- Inverse Document Frequency (IDF): how rare the term is across all documents (rarer = higher score)

- Length normalization: a match in a shorter document counts for more than the same match in a longer one

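score(q, d) = Σ IDF(t) × [tf(t,d) × (k1 + 1)] / [tf(t,d) + k1 × (1 - b + b × |d| / avgdl)]

Here tf(t,d) is the term's frequency in the document, |d| is the document length, avgdl is the average document length, and k1 and b are tuning parameters (Elasticsearch defaults: 1.2 and 0.75).

In interviews, you don’t need the formula. Just know that rare terms matching frequently in short documents score highest.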
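For readers who prefer code to notation, here is a small Python sketch of the formula above. It assumes documents are already tokenized lists, uses the Lucene-style IDF `ln(1 + (N - n + 0.5) / (n + 0.5))`, and takes `k1 = 1.2` and `b = 0.75`; the corpus is invented.

```python
import math

def bm25_score(query_terms, doc, corpus, k1=1.2, b=0.75):
    """Score one tokenized document against a query using the BM25 formula."""
    n_docs = len(corpus)
    avgdl = sum(len(d) for d in corpus) / n_docs
    score = 0.0
    for term in query_terms:
        df = sum(1 for d in corpus if term in d)  # documents containing the term
        if df == 0:
            continue
        idf = math.log(1 + (n_docs - df + 0.5) / (df + 0.5))
        tf = doc.count(term)
        length_norm = 1 - b + b * len(doc) / avgdl
        score += idf * tf * (k1 + 1) / (tf + k1 * length_norm)
    return score

corpus = [
    ["wireless", "headphones"],
    ["wireless", "keyboard", "and", "mouse"],
    ["wired", "headphones", "with", "a", "detachable", "microphone"],
]

short_score = bm25_score(["headphones"], corpus[0], corpus)
long_score = bm25_score(["headphones"], corpus[2], corpus)
print(short_score > long_score)  # True: same term frequency, shorter document
```

Both documents contain "headphones" once, so the only difference in their scores comes from length normalization.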

Cluster Architecture

An Elasticsearch cluster is a collection of nodes, each serving one or more roles.

Node Roles

| Role | Purpose | Resource Profile |

|---|---|---|

| Master-eligible | Cluster state management, index creation/deletion, shard allocation | Low CPU/memory, high stability |

| Data | Stores shards, executes queries and aggregations | High memory, high disk I/O |

| Coordinating | Routes requests, merges results from shards | Moderate CPU/memory |

| Ingest | Pre-processing pipeline (transforms before indexing) | Moderate CPU |

| ML | Machine learning jobs | High CPU/memory |

Interview tip: In a production cluster, always have dedicated master nodes (typically 3) to prevent the cluster state from being affected by heavy data operations.
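One way to see why three is the usual count, assuming master elections need a majority of the master-eligible nodes. The `quorum` helper is purely illustrative and not an Elasticsearch API:

```python
def quorum(master_eligible_nodes):
    """Smallest majority of the master-eligible nodes."""
    return master_eligible_nodes // 2 + 1

for n in (1, 2, 3, 5):
    tolerated = n - quorum(n)
    print(f"{n} master-eligible: quorum={quorum(n)}, tolerates {tolerated} failure(s)")
```

Two nodes tolerate no more failures than one, while three is the smallest count that survives the loss of a single master-eligible node.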

# elasticsearch.yml: dedicated master node (7.9+; older versions used the node.master / node.data / node.ingest booleans)

node.roles: [ master ]

# elasticsearch.yml: dedicated data node

node.roles: [ data ]

Shards: The Unit of Scale

An index is split into shards, each being a self-contained Lucene index.

// Create index with 5 primary shards and 1 replica

PUT /products

{

"settings": {

"number_of_shards": 5,

"number_of_replicas": 1

}

}

Shard routing formula:

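shard_number = hash(_routing) % number_of_primary_shards

By default, _routing = _id. Because the shard count is part of this formula, the number of primary shards is fixed at index creation. You can’t change it later without reindexing (the split and shrink APIs also work by creating a new index).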
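A short Python sketch shows why the shard count cannot simply be changed. It uses `zlib.crc32` as a stand-in hash (Elasticsearch itself uses Murmur3), and the document IDs are made up:

```python
import zlib

def route(routing_value, num_primary_shards):
    # zlib.crc32 is a stand-in hash; Elasticsearch itself uses Murmur3.
    return zlib.crc32(routing_value.encode("utf-8")) % num_primary_shards

doc_ids = [f"product-{i}" for i in range(1000)]
before = {d: route(d, 5) for d in doc_ids}
after = {d: route(d, 6) for d in doc_ids}
moved = sum(1 for d in doc_ids if before[d] != after[d])
print(f"{moved} of {len(doc_ids)} documents would map to a different shard")
```

Going from 5 to 6 shards changes the result of the modulo for most IDs, so existing documents would no longer be found on the shard the formula points to.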

Shard sizing guidelines:

- Target 10-50 GB per shard (sweet spot for most workloads)

- Each shard has fixed overhead (heap, file handles, cluster-state entries), so don’t over-shard

- Too few shards = can’t scale writes; too many = overhead and slow queries

- Rule of thumb: number of shards = ceil(expected_data_size / 30GB)
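The rule of thumb is simple arithmetic. A sketch in Python, where the function name and the sample data sizes are illustrative:

```python
import math

def primary_shard_count(expected_data_gb, target_shard_gb=30):
    """Apply the rule of thumb: ceil(expected data size / target shard size)."""
    return max(1, math.ceil(expected_data_gb / target_shard_gb))

for size_gb in (20, 200, 1500):
    shards = primary_shard_count(size_gb)
    print(f"{size_gb} GB -> {shards} primary shards (~{size_gb / shards:.1f} GB each)")
```

For 200 GB this gives 7 primary shards of roughly 28.6 GB each, which sits inside the 10-50 GB target range.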

Write Path: How Documents Get Indexed

Understanding the write path is critical for interview discussions about consistency and latency.

Step-by-Step Write Flow

Key concepts:

- Translog (Transaction Log): Write-ahead log for durability. Every write is appended to the translog before acknowledgment. If the node crashes before flush, the translog replays on restart.

- Refresh: Every 1 second (configurable), the in-memory buffer is written to a new Lucene segment. This is when the document becomes searchable. This is why ES is “near real-time,” not real-time.

- Flush: Periodically, all in-memory segments are fsynced to disk and the translog is cleared. This is the Lucene “commit” operation.

- Merge: Background process that combines small segments into larger ones. Reduces the number of segments to search and reclaims space from deleted documents.
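The interplay between these four concepts can be modelled with a toy class. This is only a mental model in Python, with an invented `ToyShard` class and plain lists in place of real segments; it is not how Lucene is implemented:

```python
class ToyShard:
    """Toy model of one shard's write path."""

    def __init__(self):
        self.translog = []   # write-ahead log, replayed after a crash
        self.buffer = []     # in-memory indexing buffer, not searchable
        self.segments = []   # searchable segments (each a list of docs)

    def index(self, doc):
        self.translog.append(doc)  # logged before the write is acknowledged
        self.buffer.append(doc)

    def refresh(self):
        # The buffer becomes a new searchable segment.
        if self.buffer:
            self.segments.append(self.buffer)
            self.buffer = []

    def flush(self):
        # Commit segments durably, after which the translog can be cleared.
        self.refresh()
        self.translog = []

    def merge(self):
        # Combine all segments into a single larger one.
        self.segments = [[doc for seg in self.segments for doc in seg]]

    def search(self, term):
        return [d for seg in self.segments for d in seg if term in d]

shard = ToyShard()
shard.index("red laptop")
print(shard.search("laptop"))  # []  (indexed but not yet refreshed)
shard.refresh()
print(shard.search("laptop"))  # ['red laptop']
```

The gap between `index` and `refresh` is the "near real-time" window: the write is safe in the translog but not yet visible to search.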

// Force refresh (make recent writes searchable immediately)

POST /products/_refresh

// Adjust refresh interval (e.g., for bulk indexing)

PUT /products/_settings

{

"index.refresh_interval": "30s"

}

// Disable refresh during bulk load, re-enable after

PUT /products/_settings { "index.refresh_interval": "-1" }

// ... bulk index ...

PUT /products/_settings { "index.refresh_interval": "1s" }

Write Consistency

ES uses a primary-backup model:

- Write hits the primary shard

- Primary replicates to all in-sync replica shards in parallel

- Write is acknowledged only after primary + all in-sync replicas confirm

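The wait_for_active_shards parameter adds a pre-flight check on top of this: the write proceeds only if at least that many shard copies are active. It does not change which replicas must acknowledge the write.

// Proceed only if every copy (primary + replicas) is active

PUT /products/_doc/1?wait_for_active_shards=all

{ "name": "laptop" }

// Proceed as long as the primary is active (the default)

PUT /products/_doc/1?wait_for_active_shards=1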

{ "name": "laptop" }

Read Path: How Search Works

Scatter-Gather Pattern

Every search query follows the scatter-gather (two-phase) pattern:

Query Phase:

- Coordinating node receives the search request

- Forwards to one copy (primary or replica) of every relevant shard

- Each shard runs the query locally, returns only doc IDs + scores (top N)

- Coordinating node merges results into a global top-N list

Fetch Phase:

- Coordinating node requests full documents for only the final top-N results

- Returns complete JSON response to the client
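The two phases can be sketched in Python with in-memory dictionaries standing in for shards. The shard contents, scores, and function names are all hypothetical:

```python
import heapq

# Hypothetical shard contents: doc ID -> (relevance score, stored document).
shards = [
    {"a1": (3.2, {"title": "Wireless headphones"}), "a2": (1.1, {"title": "Headphone stand"})},
    {"b1": (2.7, {"title": "Wireless earbuds"}), "b2": (0.4, {"title": "Audio cable"})},
    {"c1": (2.9, {"title": "Bluetooth headphones"})},
]

def query_phase(shard, top_n):
    # Each shard returns only (score, doc_id) pairs for its local top N.
    hits = [(score, doc_id) for doc_id, (score, _) in shard.items()]
    return heapq.nlargest(top_n, hits)

def fetch_phase(doc_ids):
    # The coordinator asks the owning shard for each full document.
    return [shard[doc_id][1] for doc_id in doc_ids for shard in shards if doc_id in shard]

def search(top_n):
    per_shard = [query_phase(shard, top_n) for shard in shards]  # scatter
    all_hits = (hit for hits in per_shard for hit in hits)
    merged = heapq.nlargest(top_n, all_hits)                     # gather
    return fetch_phase([doc_id for _, doc_id in merged])

print(search(top_n=2))
```

Only IDs and scores cross the network in the query phase; the full documents are fetched once, for the final winners.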

// Search combining a scored clause (must) with unscored filters

GET /products/_search

{

"query": {

"bool": {

"must": [

{ "match": { "title": "wireless headphones" } }

],

"filter": [

{ "term": { "category": "electronics" } },

{ "range": { "price": { "lte": 100 } } }

]

}

},

"sort": [

{ "_score": "desc" },

{ "created_at": "desc" }

],

"from": 0,

"size": 20

}

Interview insight: The filter context is critical for performance. Filters don’t calculate relevance scores and their results are cached in a bitset. Always put exact-match conditions (category, status, price range) in filter, not must.

Deep Pagination Problem

// Page 1: fast

GET /products/_search { "from": 0, "size": 20 }

// Page 500: SLOW — each shard must return 10,000 results

GET /products/_search { "from": 9980, "size": 20 }

Each shard must produce from + size results, and the coordinator merges num_shards × (from + size) results. At deep offsets, this is extremely expensive.
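Plugging numbers into that expression makes the cost concrete. A small Python sketch, assuming the 5 primary shards from the earlier index example (the function name is illustrative):

```python
def coordinator_entries(num_shards, from_, size):
    """Entries the coordinating node must merge for one from/size page."""
    return num_shards * (from_ + size)

for from_ in (0, 9980):
    total = coordinator_entries(num_shards=5, from_=from_, size=20)
    print(f"from={from_}: {total} entries merged to return 20 hits")
```

Page 1 merges 100 entries; page 500 merges 50,000 to return the same 20 hits.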

Solutions:

// Solution 1: search_after (recommended for deep pagination)

// First page

GET /products/_search

{

"size": 20,

"sort": [{ "created_at": "desc" }, { "_id": "asc" }]

}

// Next page — use the last document's sort values

GET /products/_search

{

"size": 20,

"sort": [{ "created_at": "desc" }, { "_id": "asc" }],

"search_after": ["2026-03-20T10:00:00", "abc123"]

}

// Solution 2: scroll API (for processing all results, e.g., export)

POST /products/_search?scroll=5m

{ "size": 1000, "query": { "match_all": {} } }

// Then: POST /_search/scroll { "scroll": "5m", "scroll_id": "..." }

// Solution 3: Point-in-time + search_after (best for concurrent readers)

POST /products/_pit?keep_alive=5m

// Returns: { "id": "pit_id_here" }

GET /_search

{

"pit": { "id": "pit_id_here", "keep_alive": "5m" },

"size": 20,

"sort": [{ "created_at": "desc" }],

"search_after": [...]

}

Mappings: Schema Design

Mappings define how fields are indexed and stored. Getting mappings right is essential for performance.

PUT /products

{

"mappings": {

"properties": {

"title": { "type": "text", "analyzer": "english" },

"title_exact": { "type": "keyword" },

"description": { "type": "text" },

"price": { "type": "float" },

"category": { "type": "keyword" },

"tags": { "type": "keyword" },

"created_at": { "type": "date" },

"location": { "type": "geo_point" },

"in_stock": { "type": "boolean" },

"metadata": { "type": "object", "enabled": false }

}

}

}

text vs keyword

| | text | keyword |

|---|---|---|

| Analyzed | Yes (tokenized, lowercased, stemmed) | No (stored as-is) |

| Use for | Full-text search | Exact match, sorting, aggregations |

| Example | Product description | Category, status, email |

| Query | match, multi_match | term, terms, range |

Common pattern: Map the same field as both:

{

"title": {

"type": "text",

"fields": {

"raw": { "type": "keyword" }

}

}

}

// Search on "title", sort/aggregate on "title.raw"

Dynamic Mapping Pitfalls

By default, ES auto-creates mappings for new fields. This is dangerous in production:

// Disable dynamic mapping to prevent mapping explosion

PUT /products

{

"mappings": {

"dynamic": "strict",

"properties": { ... }

}

}

// "strict" = reject docs with unmapped fields

// "false" = accept docs but don't index unmapped fields

// "true" = auto-map everything (default, risky)

Aggregations: Analytics at Scale

Aggregations run alongside search queries to compute summaries over matched documents.

GET /orders/_search

{

"size": 0,

"query": {

"range": { "created_at": { "gte": "2026-01-01" } }

},

"aggs": {

"revenue_by_category": {

"terms": { "field": "category", "size": 20 },

"aggs": {

"total_revenue": { "sum": { "field": "amount" } },

"avg_order_value": { "avg": { "field": "amount" } }

}

},

"monthly_trend": {

"date_histogram": {

"field": "created_at",

"calendar_interval": "month"

},

"aggs": {

"revenue": { "sum": { "field": "amount" } }

}

}

}

}

Three aggregation types:

| Type | Purpose | Example |

|---|---|---|

| Bucket | Group documents | terms, date_histogram, range, filters |

| Metric | Compute values | sum, avg, min, max, cardinality |

| Pipeline | Aggregate over other aggs | moving_fn, derivative, cumulative_sum |

Performance tip: Aggregations use doc_values (column-oriented on-disk data structure) by default for keyword and numeric fields. Never aggregate on text fields — use a keyword sub-field.
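To make the bucket/metric split concrete, here is a plain-Python sketch of what the revenue_by_category aggregation computes. The orders are made up and the code says nothing about how Elasticsearch executes it internally:

```python
from collections import defaultdict

# Made-up orders standing in for the documents matched by the query.
orders = [
    {"category": "electronics", "amount": 999.0},
    {"category": "electronics", "amount": 699.0},
    {"category": "books", "amount": 25.0},
]

# Bucket aggregation: group documents by the "category" field.
buckets = defaultdict(list)
for order in orders:
    buckets[order["category"]].append(order["amount"])

# Metric aggregations computed inside each bucket.
revenue_by_category = {
    category: {
        "doc_count": len(amounts),
        "total_revenue": sum(amounts),
        "avg_order_value": sum(amounts) / len(amounts),
    }
    for category, amounts in buckets.items()
}
print(revenue_by_category)
```

Buckets decide which documents belong together; metrics reduce each bucket to numbers.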

Common System Design Patterns

1. Product Search (E-commerce)

Key design decisions:

- Source of truth is the primary database, not ES

- Use CDC (Change Data Capture) or event-driven indexing to keep ES in sync

- Fetch full product details from the primary DB by ID after getting search results

- Use filter context for faceted navigation (category, brand, price range)
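The "search in ES, hydrate from the primary DB" decision can be sketched as follows. Everything here is a hypothetical stand-in: a dict plays the primary database and `search_index` plays the search cluster.

```python
# Hypothetical stand-ins: a dict for the primary database and a function
# that returns ranked product IDs the way a search index would.
primary_db = {
    "p1": {"name": "Wireless headphones", "price": 79.0, "stock": 12},
    "p2": {"name": "Wired headphones", "price": 19.0, "stock": 0},
}

def search_index(query):
    # Pretend relevance ranking; "p3" is a stale entry already deleted from the database.
    return ["p1", "p3", "p2"] if "headphones" in query else []

def search_products(query):
    ids = search_index(query)
    # Hydrate from the source of truth and skip IDs the database no longer has.
    return [primary_db[i] for i in ids if i in primary_db]

print([p["name"] for p in search_products("headphones")])
# ['Wireless headphones', 'Wired headphones']
```

Because the index lags the database, the hydration step is also where stale hits get dropped and fresh price or stock values get picked up.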

2. Log Analytics (ELK Stack)

Index strategy for logs:

- Use time-based indices: logs-2026.03.21

- Configure Index Lifecycle Management (ILM) to automatically:

  - Move indices through Hot → Warm → Cold → Delete

  - Roll over when the index hits 50GB or is 1 day old

// ILM policy

PUT _ilm/policy/logs_policy

{

"policy": {

"phases": {

"hot": { "actions": { "rollover": { "max_size": "50gb", "max_age": "1d" } } },

"warm": { "min_age": "7d", "actions": { "shrink": { "number_of_shards": 1 }, "forcemerge": { "max_num_segments": 1 } } },

"cold": { "min_age": "30d", "actions": { "freeze": {} } },

"delete": { "min_age": "90d", "actions": { "delete": {} } }

}

}

}

3. Autocomplete / Search-as-You-Type

// Mapping with completion suggester

PUT /products

{

"mappings": {

"properties": {

"suggest": {

"type": "completion"

},

"title": {

"type": "search_as_you_type"

}

}

}

}

// Index with suggestions

PUT /products/_doc/1

{

"title": "Apple MacBook Pro 16-inch",

"suggest": {

"input": ["Apple MacBook Pro", "MacBook Pro 16", "laptop"],

"weight": 10

}

}

// Autocomplete query (extremely fast — uses FST, not inverted index)

GET /products/_search

{

"suggest": {

"product_suggest": {

"prefix": "mac",

"completion": {

"field": "suggest",

"size": 5,

"fuzzy": { "fuzziness": 1 }

}

}

}

}

4. Geospatial Search

// Find restaurants within 5km

GET /restaurants/_search

{

"query": {

"bool": {

"must": { "match": { "cuisine": "italian" } },

"filter": {

"geo_distance": {

"distance": "5km",

"location": { "lat": 40.7128, "lon": -74.0060 }

}

}

}

},

"sort": [

{

"_geo_distance": {

"location": { "lat": 40.7128, "lon": -74.0060 },

"order": "asc",

"unit": "km"

}

}

]

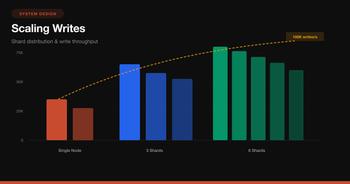

}Scaling Strategies

Horizontal Scaling Decision Matrix

Reindexing Without Downtime

You can’t change the number of primary shards or modify certain mapping properties on a live index. The solution is index aliases:

// Initial setup

PUT /products_v1 { ... }

POST /_aliases { "actions": [{ "add": { "index": "products_v1", "alias": "products" } }] }

// Application always queries "products" alias

// When you need to reindex:

PUT /products_v2 { ... } // new settings/mappings

POST /_reindex { "source": { "index": "products_v1" }, "dest": { "index": "products_v2" } }

// Atomic swap

POST /_aliases {

"actions": [

{ "remove": { "index": "products_v1", "alias": "products" } },

{ "add": { "index": "products_v2", "alias": "products" } }

]

}

Performance Optimization Checklist

Indexing Performance

// Bulk API — always use for batch indexing

POST /_bulk

{"index":{"_index":"products","_id":"1"}}

{"title":"Laptop","price":999}

{"index":{"_index":"products","_id":"2"}}

{"title":"Phone","price":699}

// Optimal bulk size: 5-15 MB per request

- Use the bulk API; never index one document at a time

- Increase refresh interval to 30s during bulk loads

- Disable replicas during initial load, re-enable after

- Use _routing to co-locate related documents on the same shard
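A client usually has to assemble the newline-delimited bulk body itself and keep each request within a size budget. A minimal Python sketch using only the standard library; the `bulk_bodies` helper and its 10 MB default are assumptions chosen to fall inside the 5-15 MB range above:

```python
import json

def bulk_bodies(index, docs, max_bytes=10 * 1024 * 1024):
    """Yield NDJSON bodies for the _bulk endpoint, each at most ~max_bytes."""
    lines, size = [], 0
    for doc_id, doc in docs:
        action = json.dumps({"index": {"_index": index, "_id": doc_id}})
        source = json.dumps(doc)
        # Two lines plus their two newline characters.
        pair_size = len(action.encode("utf-8")) + len(source.encode("utf-8")) + 2
        if lines and size + pair_size > max_bytes:
            yield "\n".join(lines) + "\n"
            lines, size = [], 0
        lines += [action, source]
        size += pair_size
    if lines:
        yield "\n".join(lines) + "\n"  # the bulk body must end with a newline

docs = [("1", {"title": "Laptop", "price": 999}), ("2", {"title": "Phone", "price": 699})]
for body in bulk_bodies("products", docs):
    print(body, end="")
```

Each yielded string would be sent as the body of one POST /_bulk request; a single document larger than the budget still gets its own request.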

Query Performance

- Use filter over must for exact matches; filters are cached and skip scoring

- Avoid wildcards at the start of terms (*phone is slow; phone* is fine)

- Limit from + size to under 10,000; use search_after for deeper pagination

- Use _source filtering to return only needed fields:

GET /products/_search

{

"_source": ["title", "price", "category"],

"query": { "match": { "title": "laptop" } }

}

- Pre-warm field data for frequently aggregated fields

- Avoid scripts in queries when possible — use runtime fields or indexed fields instead

Cluster Health

// Check cluster health

GET /_cluster/health

// green = all shards allocated

// yellow = primary shards OK, some replicas unassigned

// red = some primary shards unassigned (data loss risk!)

// Check shard allocation

GET /_cat/shards?v&h=index,shard,prirep,state,node

// Check node stats

GET /_nodes/stats/jvm,os,fs

Elasticsearch vs Alternatives

| Feature | Elasticsearch | Apache Solr | Typesense | Meilisearch |

|---|---|---|---|---|

| Built on | Lucene | Lucene | Custom C++ | Custom Rust |

| Query language | Query DSL (JSON) | Solr Query / JSON | Simple API | Simple API |

| Scaling | Automatic shard rebalancing | Manual shard management | Built-in raft | Single node (HA planned) |

| Aggregations | Very powerful | Powerful | Basic | Basic |

| Typo tolerance | Manual (fuzzy) | Manual | Built-in | Built-in |

| Operational complexity | High | High | Low | Very low |

| Best for | Large-scale search + analytics | Enterprise search | Developer-friendly search | Small-medium search |

Interview Cheat Sheet

When to Use Elasticsearch

- Full-text search across large document collections

- Log/event analytics with aggregations

- Autocomplete and search-as-you-type

- Faceted navigation (e-commerce filters)

- Geospatial queries

- Real-time dashboards over time-series data

When NOT to Use Elasticsearch

- Primary data store — ES is not ACID, data loss is possible

- Frequent updates to the same document — each update = delete + reindex

- Strong consistency requirements — ES is eventually consistent (near real-time)

- Transactions — no multi-document transactions

- Small datasets — overhead isn’t worth it under ~100K documents

- Simple key-value lookups — use Redis or a database

Key Numbers to Know

| Metric | Typical Value |

|---|---|

| Indexing to searchable | ~1 second (refresh interval) |

| Search latency (simple) | 5-50 ms |

| Search latency (complex aggs) | 50-500 ms |

| Shard size sweet spot | 10-50 GB |

| Max from + size | 10,000 (default) |

| Heap recommendation | 50% of RAM, max 31 GB |

| Shards per node | < 600 recommended |

Interview Answer Template

When an interviewer asks you to design a search feature:

- Source of truth: primary DB (PostgreSQL/MySQL), not ES

- Sync mechanism: CDC via Kafka/Debezium, or application-level dual writes

- Index design: define mappings, analyzers, shard count based on data size

- Query design: bool query with must for relevance, filter for exact matches

- Pagination: search_after for deep pagination, never from > 10,000

- Scaling: replicas for read throughput, time-based indices for logs, ILM for lifecycle

- Failure handling: what happens if ES is down? Degrade gracefully, queue writes for replay

Wrapping Up

Elasticsearch is a powerful tool, but it’s not a database replacement. It’s a secondary index — a read-optimized, denormalized view of your data that enables search and analytics capabilities that traditional databases can’t match.

The mental model for system design interviews:

- Write path: App → Primary DB → Event/CDC → ES (async, eventually consistent)

- Read path: App → ES for search/filter/aggregate → Primary DB for full record (if needed)

- Scaling: Shards for write parallelism, replicas for read throughput, ILM for data lifecycle

- Tradeoff: Near real-time (not real-time), eventually consistent (not strongly consistent), optimized for reads (not writes)

Master these concepts and you’ll be able to confidently design any search-heavy system in an interview.