“Kafka is not a message queue. It’s a distributed commit log that happens to be useful as a message queue, event store, and stream processor.”

Apache Kafka appears in nearly every system design interview involving event-driven architecture, real-time data pipelines, or service decoupling. It’s the backbone of architectures at LinkedIn, Uber, Netflix, and Airbnb — processing trillions of messages per day.

This article covers what you need for interviews: how Kafka works internally, the tradeoffs it makes, and the design patterns built on top of it.

What is Kafka?

Kafka is a distributed, partitioned, replicated append-only commit log. That single sentence encodes everything important about its architecture:

- Distributed — runs across a cluster of brokers

- Partitioned — topics are split into ordered partitions for parallelism

- Replicated — each partition is copied to multiple brokers for fault tolerance

- Append-only — messages are immutable once written; consumers track their position via offsets

- Commit log — not a queue; messages aren’t deleted after consumption

Core Architecture

Key Components

| Component | Role |

|---|---|

| Broker | A single Kafka server. Stores partitions, serves reads/writes. |

| Topic | A named stream of records (like a database table). |

| Partition | An ordered, immutable sequence of records within a topic. The unit of parallelism. |

| Offset | A sequential ID for each record within a partition. Consumers track their position via offsets. |

| Producer | Publishes records to topics. |

| Consumer | Reads records from topics by pulling. |

| Consumer Group | A set of consumers that cooperatively consume a topic. Each partition is assigned to exactly one consumer in the group. |

| Controller | The broker responsible for partition leader election and cluster metadata. Elected via ZooKeeper in older versions; newer versions use a KRaft controller quorum instead. |

Topics and Partitions

A topic is split into partitions — each partition is an ordered, append-only log stored on disk.

Topic: orders (3 partitions)

Partition 0: [msg0] [msg1] [msg2] [msg3] [msg4] [msg5] → newest

Partition 1: [msg0] [msg1] [msg2] [msg3] → newest

Partition 2: [msg0] [msg1] [msg2] [msg3] [msg4] → newest

↑ offset

Key properties:

- Ordering is guaranteed only within a partition, not across partitions

- Messages are immutable — you can’t update or delete a record in place

- Messages are retained by time or size, not consumed-and-deleted like traditional queues

- Each consumer tracks its own offset — it can re-read old messages by resetting its offset

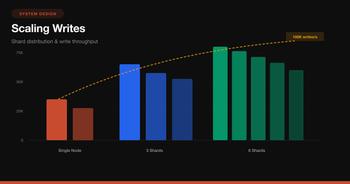
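As a rough illustration of the offset properties listed above, the sketch below models one partition as a plain Python list. The `PartitionLog` class and the consumer names are made up for this example and are not part of any Kafka client; the only point is that reading never removes records and that each consumer's position is just an integer it can rewind.

```python
from dataclasses import dataclass, field


@dataclass
class PartitionLog:
    """Toy append-only log standing in for a single Kafka partition."""
    records: list = field(default_factory=list)

    def append(self, value):
        self.records.append(value)
        return len(self.records) - 1  # offset assigned to the new record

    def read(self, offset, max_records=10):
        # Reading is non-destructive: it only slices the list.
        return self.records[offset:offset + max_records]


log = PartitionLog()
for value in ["msg0", "msg1", "msg2"]:
    log.append(value)

# Each (hypothetical) consumer keeps its own position.
offsets = {"billing": 0, "analytics": 0}

billing_batch = log.read(offsets["billing"])
offsets["billing"] += len(billing_batch)

analytics_batch = log.read(offsets["analytics"], max_records=1)
offsets["analytics"] += len(analytics_batch)

# Replay: rewinding the offset re-reads the same records.
offsets["billing"] = 0
replayed = log.read(offsets["billing"])
```

Here `billing` has read everything while `analytics` is still at offset 1, and resetting `billing` to 0 returns the same three records again.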

Partition Count: The Most Important Design Decision

Topic parallelism = min(partition_count, consumer_count_in_group)

- Too few partitions → consumers sit idle, can’t scale throughput

- Too many partitions → more leader elections, more memory, higher end-to-end latency, more open file handles

- You can increase partitions later but NEVER decrease them — and increasing breaks key-based ordering guarantees for existing data

Rule of thumb: Start with max(expected_throughput_MB/s / 10, expected_consumer_count) and round up. For most systems, 6–30 partitions is a reasonable starting point.
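The rule of thumb can be written as a small helper. This is only a sketch of the arithmetic: the function name is hypothetical, and the 10 MB/s per-partition figure is the article's planning assumption, not a measured limit.

```python
import math


def suggested_partitions(throughput_mb_s: float, consumer_count: int,
                         per_partition_mb_s: float = 10.0) -> int:
    """Starting partition count: enough for throughput and for consumer parallelism."""
    by_throughput = math.ceil(throughput_mb_s / per_partition_mb_s)
    return max(by_throughput, consumer_count, 1)


# 85 MB/s needs 9 partitions at 10 MB/s each, which exceeds the 6 consumers.
print(suggested_partitions(85, 6))   # 9
# 20 MB/s needs only 2 partitions, but 12 consumers need 12 to all be busy.
print(suggested_partitions(20, 12))  # 12
```

Treat the result as a floor and round up to leave headroom, since the count cannot be reduced later.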

How Producers Choose a Partition

// Default for keyed records: murmur2 hash of the serialized key, mod partition count
int partition = Utils.toPositive(Utils.murmur2(keyBytes)) % numPartitions;

// No key? Round-robin (or sticky partitioning in newer clients)

// Custom partitioner example:
import java.util.Map;
import org.apache.kafka.clients.producer.Partitioner;
import org.apache.kafka.common.Cluster;

public class GeoPartitioner implements Partitioner {
    @Override
    public int partition(String topic, Object key, byte[] keyBytes,
                         Object value, byte[] valueBytes, Cluster cluster) {
        // extractRegion is an application-specific helper (not shown)
        String region = extractRegion((String) key);
        return switch (region) {
            case "US" -> 0;
            case "EU" -> 1;
            case "APAC" -> 2;
            default -> 3;
        };
    }

    @Override
    public void configure(Map<String, ?> configs) {}

    @Override
    public void close() {}
}

Interview insight: If you need ordering for a specific entity (e.g., all events for user:1001), use the entity ID as the message key. All messages with the same key go to the same partition → guaranteed order.
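To make the key-to-partition mapping concrete, here is a minimal sketch. It uses `zlib.crc32` purely as a stand-in for Kafka's murmur2 (so the partition numbers will not match a real cluster), and `partition_for` is a hypothetical helper. It shows that a key always maps to the same partition for a fixed partition count, and that changing the count remaps keys.

```python
import zlib


def partition_for(key: str, num_partitions: int) -> int:
    # crc32 is a stand-in for murmur2; any stable hash illustrates the idea.
    return zlib.crc32(key.encode("utf-8")) % num_partitions


keys = [f"user:{i}" for i in range(1000, 1020)]

# Same key, same partition count: always the same partition.
stable = all(partition_for(k, 6) == partition_for(k, 6) for k in keys)

# Growing the topic from 6 to 8 partitions changes where some keys land.
before = {k: partition_for(k, 6) for k in keys}
after = {k: partition_for(k, 8) for k in keys}
moved = [k for k in keys if before[k] != after[k]]

print(f"stable mapping: {stable}")
print(f"{len(moved)} of {len(keys)} keys changed partition after the resize")
```

Any key in `moved` would have its older events in one partition and its newer events in another, which is why adding partitions breaks per-key ordering across the resize.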

Producer Internals

Write Path

Durability: The acks Setting

This is the most important producer config and a frequent interview topic:

| acks | Behavior | Durability | Latency |
|---|---|---|---|
| 0 | Fire and forget. Don’t wait for any acknowledgment. | Lowest: messages can be lost | Fastest |
| 1 | Wait for the partition leader to write to its local log. | Medium: lost if leader dies before replication | Medium |
| all (-1) | Wait for all in-sync replicas (ISR) to acknowledge. | Highest: survives a single broker failure, provided min.insync.replicas is at least 2 | Slowest |

from confluent_kafka import Producer

producer = Producer({
    'bootstrap.servers': 'kafka1:9092,kafka2:9092,kafka3:9092',
    'acks': 'all',                    # strongest durability
    'enable.idempotence': True,       # prevent duplicates on retry
    'max.in.flight.requests.per.connection': 5,
    'retries': 2147483647,            # retry forever
    'linger.ms': 5,                   # batch for 5ms to improve throughput
    'batch.size': 65536,              # 64KB batch
    'compression.type': 'lz4',        # compress batches
})

def delivery_callback(err, msg):
    if err:
        print(f'Delivery failed: {err}')
    else:
        print(f'Delivered to {msg.topic()} [{msg.partition()}] @ {msg.offset()}')

producer.produce(
    topic='orders',
    key='user:1001',
    value='{"orderId":"abc","amount":99.99}',
    callback=delivery_callback
)

producer.flush()  # wait for all messages to be delivered

Batching and Compression

Producers batch messages per partition before sending:

- batch.size: max bytes per batch (default 16KB, increase to 64-256KB for throughput)

- linger.ms: wait this long to fill the batch (default 0, set to 5-20ms)

- compression.type: compress each batch (lz4 for speed, zstd for ratio)

Batching + compression can 10x your throughput at the cost of a few milliseconds of latency.

Consumer Internals

Consumer Groups: The Scaling Model

Every consumer belongs to a consumer group. Kafka ensures:

- Each partition is consumed by exactly one consumer in a group

- If consumers > partitions → some consumers sit idle

- If consumers < partitions → some consumers handle multiple partitions

- If a consumer dies → its partitions are rebalanced to other consumers in the group
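These four rules can be seen in a toy assignment function. The round-robin logic below is a simplified stand-in for Kafka's real assignors (range, round-robin, sticky), and `assign` and the consumer names are hypothetical.

```python
def assign(partitions: list[int], consumers: list[str]) -> dict[str, list[int]]:
    """Spread partitions over consumers so each partition has exactly one owner."""
    if not consumers:
        return {}
    assignment = {c: [] for c in consumers}
    for i, partition in enumerate(partitions):
        owner = consumers[i % len(consumers)]
        assignment[owner].append(partition)
    return assignment


partitions = [0, 1, 2]

# Fewer consumers than partitions: c1 handles two partitions.
print(assign(partitions, ["c1", "c2"]))
# {'c1': [0, 2], 'c2': [1]}

# More consumers than partitions: c4 sits idle.
print(assign(partitions, ["c1", "c2", "c3", "c4"]))
# {'c1': [0], 'c2': [1], 'c3': [2], 'c4': []}

# c1 has died: a rebalance hands its partitions to the survivors.
print(assign(partitions, ["c2", "c3"]))
# {'c2': [0, 2], 'c3': [1]}
```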

from confluent_kafka import Consumer

consumer = Consumer({
    'bootstrap.servers': 'kafka1:9092',
    'group.id': 'order-processor',
    'auto.offset.reset': 'earliest',   # start from beginning if no committed offset
    'enable.auto.commit': False,       # manual commit for at-least-once
    'max.poll.interval.ms': 300000,    # 5 min max between polls
    'session.timeout.ms': 45000,
})

consumer.subscribe(['orders'])

try:
    while True:
        msg = consumer.poll(timeout=1.0)
        if msg is None:
            continue
        if msg.error():
            print(f'Error: {msg.error()}')
            continue

        # Process the message (process_order is the application's own handler)
        process_order(msg.key(), msg.value())

        # Commit offset AFTER successful processing (at-least-once).
        # asynchronous=False blocks until the broker confirms the commit.
        consumer.commit(message=msg, asynchronous=False)
finally:
    consumer.close()

Offset Management

Offsets are how consumers track what they’ve consumed. Three strategies:

| Strategy | How | Guarantee |
|---|---|---|
| Auto-commit | enable.auto.commit=true, commits every auto.commit.interval.ms | No strict guarantee: a crash can skip messages (offset committed before processing finished) or replay them (processed but not yet committed) |
| Manual commit after processing | consumer.commit() after each message/batch | At-least-once (may reprocess on crash) |
| Exactly-once | Transactional producer + read_committed consumer | Exactly-once (complex setup) |
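The difference between these guarantees comes down to whether the offset is committed before or after processing. The simulation below is a sketch with no Kafka involved: `run` is a hypothetical function that processes a list, crashes between the two steps for one record, and then restarts from the last committed offset.

```python
def run(records, commit_first, crash_at):
    """Return everything that got processed across a crash and a restart."""
    committed = 0      # offset a restarted consumer resumes from
    processed = []
    for offset, record in enumerate(records):
        if commit_first:
            committed = offset + 1          # step 1: commit
            if offset == crash_at:
                break                       # crash between commit and process
            processed.append(record)        # step 2: process
        else:
            processed.append(record)        # step 1: process
            if offset == crash_at:
                break                       # crash between process and commit
            committed = offset + 1          # step 2: commit
    # Restart: resume from the last committed offset.
    processed.extend(records[committed:])
    return processed


records = ["a", "b", "c"]
print(run(records, commit_first=True, crash_at=1))   # ['a', 'c']
print(run(records, commit_first=False, crash_at=1))  # ['a', 'b', 'b', 'c']
```

Committing first loses `b` (at-most-once); committing after processing delivers `b` twice (at-least-once), which is why at-least-once consumers need to tolerate duplicates.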

from confluent_kafka import TopicPartition

# At-least-once: commit after processing a batch
messages = consumer.consume(num_messages=100, timeout=5.0)
for msg in messages:
    if msg.error():
        continue  # error events carry no record
    process(msg)
if messages:
    consumer.commit(asynchronous=False)  # commit the batch

# Seeking to a specific offset (replay)
# (offset 0 only exists while retention has not yet removed it)
consumer.assign([TopicPartition('orders', partition=0, offset=0)])  # replay from start

Rebalancing

When a consumer joins, leaves, or crashes, Kafka rebalances — reassigning partitions across the remaining consumers. This causes a brief pause in consumption.

Rebalancing strategies:

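- Eager (the only protocol before 2.4, and still used by the default range assignor): revoke all partitions, then reassign everything. Causes a full stop-the-world pause.

- Cooperative/Incremental (available since 2.4, opt-in via the cooperative-sticky assignor): only reassign affected partitions. Much less disruption.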

consumer = Consumer({
    # ...
    'partition.assignment.strategy': 'cooperative-sticky',  # incremental rebalance
})

Interview tip: If asked “how do you avoid rebalancing storms?”, the answer is: use cooperative-sticky assignment, tune session.timeout.ms and max.poll.interval.ms appropriately, and ensure consumers process fast enough to poll within the interval.

Replication and Fault Tolerance

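Every partition has one leader and N-1 followers, where N is the replication factor. Writes always go through the leader, and by default so do reads (since 2.4, consumers can optionally fetch from a nearby follower). Followers pull from the leader to stay in sync.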

In-Sync Replicas (ISR)

The ISR is the set of replicas that are “caught up” with the leader. A follower drops out of the ISR if it falls behind by more than replica.lag.time.max.ms (default 30s).

Partition 0: Leader=Broker1, ISR=[Broker1, Broker2, Broker3]

Broker3 falls behind →
Partition 0: Leader=Broker1, ISR=[Broker1, Broker2]

Broker1 dies →
Partition 0: Leader=Broker2, ISR=[Broker2] (Broker2 elected new leader)

Broker1 recovers, catches up →
Partition 0: Leader=Broker2, ISR=[Broker2, Broker1]

Durability Guarantees

The combination of acks, min.insync.replicas, and replication.factor determines your durability:

# Topic-level config for strong durability
replication.factor=3
min.insync.replicas=2

# Producer config
acks=all

This means: a write is acknowledged only after every replica currently in the ISR has it, and it is rejected outright if the ISR has shrunk below 2. The cluster can tolerate 1 broker failure without data loss and without blocking writes.

| Config | Tolerates | Behavior |
|---|---|---|
| RF=3, min.isr=2, acks=all | 1 broker failure | Recommended for production |
| RF=3, min.isr=1, acks=all | 2 broker failures for availability; a write acknowledged by the leader alone is lost if that broker then fails | Higher availability, lower durability |
| RF=3, min.isr=2, acks=1 | Leader failure, but only for records the followers had already replicated | Fast but risky |
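The interaction between acks, the current ISR size, and min.insync.replicas can be summarised as a small decision function. This is a simplified model of the broker's behaviour under the assumptions stated in the comments, not broker code, and `write_outcome` is a hypothetical name.

```python
def write_outcome(acks: str, isr_size: int, min_insync_replicas: int) -> str:
    """Describe what a producer sees for one write, given the partition's state."""
    if acks == "0":
        return "not acknowledged: the producer never waits for a response"
    if isr_size < 1:
        return "rejected: no leader available"
    if acks == "1":
        # min.insync.replicas is only enforced for acks=all.
        return "acknowledged once the leader has the record"
    if isr_size < min_insync_replicas:
        return "rejected: not enough in-sync replicas"
    return f"acknowledged once all {isr_size} in-sync replicas have the record"


# RF=3, min.insync.replicas=2, acks=all as brokers fail one by one.
for isr_size in (3, 2, 1):
    print(isr_size, "->", write_outcome("all", isr_size, 2))
```

With one broker down (ISR of 2) writes still succeed; with two down, acks=all writes are rejected rather than accepted with a single copy, while acks=1 would keep accepting them.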

Retention and Compaction

Kafka doesn’t delete messages after consumption. Instead, it uses retention policies:

Time/Size-Based Retention

# Keep messages for 7 days (default)
retention.ms=604800000

# Or keep up to 100GB per partition
retention.bytes=107374182400

# Segment size: Kafka breaks each partition into segment files
segment.bytes=1073741824 # 1GB segments

Log Compaction

For compacted topics, Kafka keeps only the latest value for each key. Older values are garbage collected.

cleanup.policy=compact

Key: user:1001 → {"name":"Alice","v":1} ← deleted during compaction
Key: user:1002 → {"name":"Bob","v":1} ← deleted during compaction (superseded by the tombstone below)
Key: user:1001 → {"name":"Alice","v":2} ← kept (latest for this key)
Key: user:1002 → null ← tombstone: delete this key

Use cases for compacted topics:

- Changelog/CDC — latest state of each database row

- Configuration — latest config for each service

- Cache rebuilding — replay the topic to rebuild a cache from scratch
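A minimal sketch of what compaction retains, assuming a log represented as a list of `(key, value)` pairs with `None` as the tombstone. The `compact` function is hypothetical and simplifies one detail: real Kafka keeps tombstones around for a configurable period before removing them, whereas this version drops them immediately.

```python
def compact(log):
    """Keep only the latest record per key and drop keys whose latest record is a tombstone."""
    latest_offset = {key: offset for offset, (key, _) in enumerate(log)}
    return [
        (key, value)
        for offset, (key, value) in enumerate(log)
        if latest_offset[key] == offset and value is not None
    ]


log = [
    ("user:1001", '{"name":"Alice","v":1}'),
    ("user:1002", '{"name":"Bob","v":1}'),
    ("user:1001", '{"name":"Alice","v":2}'),
    ("user:1002", None),  # tombstone
]

print(compact(log))
# [('user:1001', '{"name":"Alice","v":2}')]
```

Replaying the compacted log into a dictionary is exactly the cache-rebuilding use case: one record per live key, in log order.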

Exactly-Once Semantics (EOS)

Kafka supports exactly-once through two mechanisms:

1. Idempotent Producer

producer = Producer({
    'enable.idempotence': True,  # assigns a producer ID + sequence numbers
    # Kafka deduplicates retried messages using (producerId, sequenceNumber)
})

This prevents duplicates from producer retries within a single session.
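The deduplication idea can be sketched in a few lines. This is a toy model, not the broker's implementation: `PartitionLeader` is a hypothetical class, and it only handles the retry case (a real broker also rejects gaps in the sequence).

```python
class PartitionLeader:
    """Toy partition leader that drops retried writes it has already appended."""

    def __init__(self):
        self.log = []
        self.last_seq = {}  # producer_id -> highest sequence number appended

    def append(self, producer_id, seq, value):
        if seq <= self.last_seq.get(producer_id, -1):
            return "duplicate"  # retry of a write that already succeeded
        self.log.append(value)
        self.last_seq[producer_id] = seq
        return "appended"


leader = PartitionLeader()
results = [
    leader.append(producer_id=7, seq=0, value="a"),
    leader.append(producer_id=7, seq=1, value="b"),
    # The ack for seq=1 was lost, so the producer retries the same write.
    leader.append(producer_id=7, seq=1, value="b"),
]
print(results)     # ['appended', 'appended', 'duplicate']
print(leader.log)  # ['a', 'b']
```

Because the sequence state is tied to the producer ID, a producer that restarts and receives a new ID gets no protection for writes from its previous session.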

2. Transactions (Cross-Partition EOS)

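producer.initTransactions();

try {
    producer.beginTransaction();

    // Produce to multiple partitions atomically
    producer.send(new ProducerRecord<>("orders", "key1", "value1"));
    producer.send(new ProducerRecord<>("payments", "key2", "value2"));

    // Commit consumer offsets as part of the transaction
    producer.sendOffsetsToTransaction(offsets, consumerGroupMetadata);

    producer.commitTransaction();
} catch (ProducerFencedException | OutOfOrderSequenceException | AuthorizationException e) {
    // Fatal errors: this producer instance cannot continue, so close it
    producer.close();
} catch (KafkaException e) {
    // Any other error: abort so that none of the writes become visible
    producer.abortTransaction();
}

Consumers must be configured to only read committed messages: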

consumer = Consumer({
    'isolation.level': 'read_committed',  # skip uncommitted/aborted transactions
})

Interview insight: Exactly-once is about the consume-transform-produce loop being atomic. It doesn’t magically make external side effects (HTTP calls, DB writes) idempotent; you still need application-level deduplication for those.
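One common shape for that application-level deduplication is to record an ID for every event whose side effect has been applied. The sketch below assumes each event carries a unique `eventId` field (a hypothetical name) and uses an in-memory set; a real service would need a durable store, such as a database table with a unique constraint.

```python
processed_ids = set()  # stand-in for a durable store of handled event IDs
charges = []           # stand-in for the external side effect (e.g. a payment API call)


def handle(event) -> bool:
    """Apply the side effect once per eventId; return False for a redelivery."""
    if event["eventId"] in processed_ids:
        return False
    charges.append(event["amount"])
    processed_ids.add(event["eventId"])
    return True


event = {"eventId": "evt-1", "orderId": "abc", "amount": 99.99}
first = handle(event)   # side effect applied
second = handle(event)  # redelivered after a crash or rebalance: ignored

print(first, second, charges)  # True False [99.99]
```

In practice the side effect and the ID record should be written atomically (for example in one database transaction), otherwise a crash between the two reintroduces the duplicate.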

Common System Design Patterns

1. Event-Driven Microservices

Why Kafka over direct HTTP calls:

- Decoupling — Order Service doesn’t know (or care) about downstream consumers

- Resilience — if Payment Service is down, events queue up; no data loss

- Replay — new services can replay history from the topic

- Fan-out — multiple consumer groups independently process the same events

2. Change Data Capture (CDC)

# Debezium connector config (Kafka Connect)
{
  "connector.class": "io.debezium.connector.postgresql.PostgresConnector",
  "database.hostname": "postgres",
  "database.port": "5432",
  "database.dbname": "orders_db",
  "table.include.list": "public.orders,public.customers",
  "topic.prefix": "cdc",
  "plugin.name": "pgoutput"
}

With topic.prefix set to cdc, change events are produced to cdc.public.orders and cdc.public.customers.

CDC captures every INSERT/UPDATE/DELETE from the database WAL and publishes it to Kafka. Downstream consumers can:

- Keep Elasticsearch in sync (search indexing)

- Update a cache (Redis)

- Replicate to a data warehouse

- Feed real-time dashboards

3. Log Aggregation

# Application logs → Kafka → multiple sinks
# Typically: App → Fluentd/Filebeat → Kafka → Consumers

# Simple Python log producer
import logging
from confluent_kafka import Producer

class KafkaLogHandler(logging.Handler):
    def __init__(self, producer, topic):
        super().__init__()
        self.producer = producer
        self.topic = topic

    def emit(self, record):
        try:
            self.producer.produce(
                self.topic,
                key=record.name,
                value=self.format(record)
            )
            self.producer.poll(0)  # serve delivery callbacks without blocking
        except Exception:
            self.handleError(record)  # logging must never crash the application

# `producer` is an already configured confluent_kafka.Producer
logger = logging.getLogger('myapp')
logger.addHandler(KafkaLogHandler(producer, 'app-logs'))

4. Event Sourcing

Store the full history of state changes as events:

import json

# Instead of updating a row, append events
# ([...] and "..." stand for values elided here; fill them in before serializing)
events = [
    {"type": "OrderCreated", "orderId": "abc", "items": [...], "ts": 1679000001},
    {"type": "PaymentReceived", "orderId": "abc", "amount": 99.99, "ts": 1679000050},
    {"type": "OrderShipped", "orderId": "abc", "trackingId": "...", "ts": 1679000100},
]

# Use a regular (non-compacted) topic keyed by orderId with long retention:
# compaction would keep only the latest event per key and destroy the history.
# Publish each event; consumers replay the topic to reconstruct current state.
for event in events:
    producer.produce('order-events', key=event['orderId'], value=json.dumps(event))

5. CQRS (Command Query Responsibility Segregation)

Writes → Command Service → Kafka → Event Store
                                 → Read Model Builder → Optimized Read DB

Reads → Query Service → Read DB (Elasticsearch, Redis, Materialized View)

Kafka Connect and Kafka Streams

Kafka Connect

Pre-built connectors for moving data in/out of Kafka without writing code:

// Source connector: PostgreSQL → Kafka
{
  "name": "postgres-source",
  "config": {
    "connector.class": "io.debezium.connector.postgresql.PostgresConnector",
    "database.hostname": "db.example.com",
    "database.dbname": "mydb",
    "topic.prefix": "mydb"
  }
}

// Sink connector: Kafka → Elasticsearch
{
  "name": "elasticsearch-sink",
  "config": {
    "connector.class": "io.confluent.connect.elasticsearch.ElasticsearchSinkConnector",
    "topics": "mydb.public.products",
    "connection.url": "http://elasticsearch:9200",
    "type.name": "_doc",
    "key.ignore": false
  }
}

Kafka Streams

A lightweight stream processing library (no separate cluster needed):

StreamsBuilder builder = new StreamsBuilder();

// Read from orders topic (assumes default serdes for String keys and Order values are set in config)
KStream<String, Order> orders = builder.stream("orders");

// Filter, transform, aggregate
KTable<String, Long> orderCounts = orders
    .filter((key, order) -> order.getAmount() > 100)
    .groupBy((key, order) -> order.getRegion())
    .count();

// Write results to a new topic; the counts are Longs, so state the serdes explicitly
orderCounts.toStream().to("order-counts-by-region", Produced.with(Serdes.String(), Serdes.Long()));

KafkaStreams streams = new KafkaStreams(builder.build(), config);
streams.start();

Schema Management

Use Schema Registry (Confluent) with Avro, Protobuf, or JSON Schema to enforce contracts:

from confluent_kafka.schema_registry import SchemaRegistryClient
from confluent_kafka.schema_registry.avro import AvroSerializer
from confluent_kafka.serialization import SerializationContext, MessageField

schema_str = """
{
  "type": "record",
  "name": "Order",
  "fields": [
    {"name": "orderId", "type": "string"},
    {"name": "userId", "type": "string"},
    {"name": "amount", "type": "double"},
    {"name": "createdAt", "type": {"type": "long", "logicalType": "timestamp-millis"}}
  ]
}
"""

schema_registry = SchemaRegistryClient({'url': 'http://schema-registry:8081'})
avro_serializer = AvroSerializer(schema_registry, schema_str)

# order_dict is a dict whose keys match the schema fields
producer.produce(
    'orders',
    key='order:abc',
    value=avro_serializer(order_dict, SerializationContext('orders', MessageField.VALUE))
)

Schema evolution rules (backward/forward/full compatibility):

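- Backward (default): consumers on the new schema can read data written with the old one. Safe to add fields with defaults or to delete fields.

- Forward: consumers on the old schema can read data written with the new one. Safe to add fields or to delete fields that have defaults.

- Full: both. Only add/remove optional fields with defaults.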

Performance Tuning

Throughput Optimization

| Parameter | Default | Tuned | Impact |
|---|---|---|---|
| batch.size (producer) | 16KB | 64-256KB | Fewer requests, higher throughput |
| linger.ms (producer) | 0 | 5-20ms | Allow batching |
| compression.type | none | lz4 / zstd | Smaller payloads, less network I/O |
| fetch.min.bytes (consumer) | 1 | 65536 | Fewer fetch requests |
| fetch.max.wait.ms (consumer) | 500 | 500-1000 | Batch fetches |
| num.io.threads (broker) | 8 | cores × 2 | More parallel I/O |
| num.network.threads (broker) | 3 | cores | More parallel network handling |

Latency Optimization

For low-latency use cases (< 10ms end-to-end):

producer = Producer({
    'linger.ms': 0,              # send immediately instead of waiting to fill a batch
    'acks': 1,                   # don't wait for replicas
    'compression.type': 'none',
})
# batch.size is left at its default: with linger.ms=0 the producer does not wait to fill batches

The tradeoff: throughput vs latency. You can’t maximize both.

Kafka vs Alternatives

| Feature | Kafka | RabbitMQ | Amazon SQS | Pulsar | Redpanda |

|---|---|---|---|---|---|

| Model | Distributed log | Message broker | Managed queue | Distributed log | Distributed log |

| Ordering | Per-partition | Per-queue | FIFO queues only | Per-partition | Per-partition |

| Retention | Configurable (days/size) | Until consumed | 14 days max | Tiered storage | Configurable |

| Replay | Yes (seek by offset) | No (consumed = deleted) | No | Yes | Yes |

| Consumer model | Pull | Push | Pull | Pull | Pull (Kafka API) |

| Throughput | Millions msg/s | ~50K msg/s | Near-unlimited (standard queues); ~3K msg/s with batching (FIFO queues) | Millions msg/s | Millions msg/s |

| Exactly-once | Yes (transactions) | No (at-least-once) | No (at-least-once) | Yes | Yes |

| Operational complexity | High (ZK/KRaft, brokers, SR) | Medium | None (managed) | High | Low (no JVM/ZK) |

When to use Kafka: High-throughput event streaming, CDC, event sourcing, log aggregation, connecting many producers and consumers.

When NOT to use Kafka: Simple task queues (use RabbitMQ/SQS), request-reply patterns, very low message volumes (< 1K/s), sub-millisecond latency requirements.

Interview Cheat Sheet

Key Numbers

| Metric | Typical Value |

|---|---|

| Throughput (single partition) | ~10 MB/s write, ~30 MB/s read |

| Throughput (cluster) | GB/s+ with enough partitions |

| Latency (p99, acks=1) | 2-5 ms |

| Latency (p99, acks=all) | 5-15 ms |

| Partition count sweet spot | 6-30 per topic |

| Replication factor | 3 (production standard) |

| Retention default | 7 days |

| Max message size default | 1 MB |

| Consumer group rebalance | 1-30 seconds |

Interview Answer Template

When designing an event-driven system:

- Why Kafka? — need decoupling, event replay, high throughput, multiple consumers

- Topic design: one topic per event type (e.g., orders, payments, shipments)

- Partitioning strategy: partition key = entity ID for ordering (e.g., userId, orderId)

- Partition count: based on expected throughput and consumer parallelism

- Replication: RF=3, min.insync.replicas=2, acks=all for production durability

- Consumer groups: one group per downstream service; each scales independently

- Delivery guarantee — at-least-once by default; exactly-once if consume-transform-produce

- Schema management — Avro + Schema Registry with backward compatibility

- Failure handling — dead letter topic for poison messages, idempotent consumers

- Monitoring — consumer lag, under-replicated partitions, ISR shrink rate

Common Interview Questions

Q: How do you handle message ordering?

A: Use the entity ID as the partition key. All events for that entity go to the same partition, which guarantees ordering. Cross-entity ordering requires a single partition (limits throughput).

Q: What happens when a consumer crashes?

A: The consumer group coordinator detects the missing heartbeat after session.timeout.ms, triggers a rebalance, and assigns that consumer’s partitions to other consumers in the group. Any uncommitted messages are reprocessed (at-least-once).

Q: How do you handle a poison message (bad format/unprocessable)?

A: Catch the error, publish the message to a dead letter topic (DLT) with error metadata, commit the offset, and continue. A separate process inspects and resolves DLT messages.

try:
    process(msg)
    consumer.commit(message=msg)
except Exception as e:
    producer.produce(
        'orders.dead-letter',
        key=msg.key(),
        value=msg.value(),
        headers=[('error', str(e)), ('original-topic', 'orders')]
    )
    producer.flush()              # make sure the DLT write landed before skipping
    consumer.commit(message=msg)  # skip the poison message

Q: How do you scale consumers?

A: Add more consumers to the consumer group (up to the partition count). If you need more parallelism, increase the partition count, but be aware this may break key-based ordering for in-flight data.

Wrapping Up

Kafka’s power comes from a simple insight: an append-only log is the most general-purpose data structure for distributed systems. It can be a queue, an event store, a database changelog, or a stream processor — depending on how you consume it.

The mental model for interviews:

- Producers write to partition leaders; partitioning determines parallelism and ordering

- Consumers pull at their own pace, tracking position via offsets

- Consumer groups enable scaling and fault tolerance through partition assignment

- Replication (ISR) provides durability; acks=all + min.insync.replicas=2 is the production standard

- Retention keeps data available for replay; compacted topics keep latest-per-key

- The tradeoff triangle: throughput vs latency vs durability — pick two, tune the third

If you understand partitions, consumer groups, and the acks + ISR durability model, you can design any Kafka-based system in an interview.